AI-assisted moderation in the fediverse is happening. Now what?

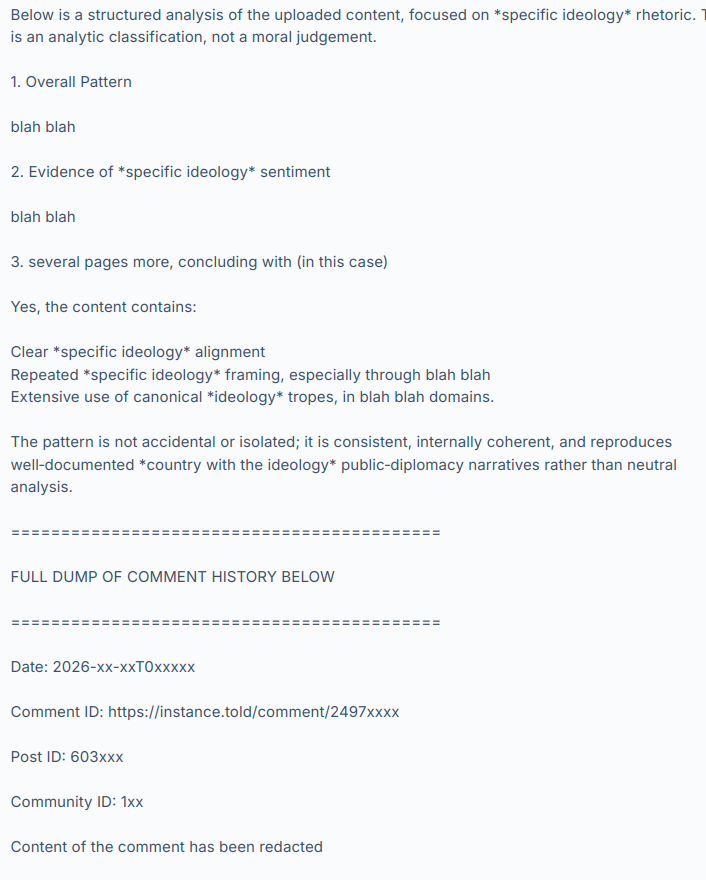

1d 8h ago by piefed.social/u/rimu in fediverse from join.piefed.social

I recently discovered that some popular federated instances have been using LLM-assisted moderation tooling that evaluates whether someone has said something bannable. They do this by running a script/app that sends the user’s comment history to OpenAI with the question “analyze this content for evidence of specific political ideology sentiment. Also identify any related political ideology tropes”. (The italic bits are where I've redacted the ideology they're seeking).

OpenAI’s LLM (they’re using GPT-5.3-mini) then responds with something like:

and so on, hundreds of comments.

I have not named the instances or people involved, to give them time to consider the results of this discussion, make any corrective changes they want and disclose their practices at their own pace and in their own way. I have also redacted the evidence to avoid personal attacks and dogpiling. Let’s focus on the system, not the individuals involved. Today these instances and people are using it, and maybe we’re ok with that because it’s being used by groups we agree with, but what if people we strongly disagree with used it on their instances tomorrow?

The use and existence of this tooling raises a lot of other questions too.

What are the risks? Fedi moderators are often unsupervised, untrained volunteers and these are powerful tools.

What safeguards do we need?

Would asking an LLM “please evaluate this person’s political opinions” give different results than “find evidence we can use to ban them” (as used in the cases I’ve seen)?

What are our transparency expectations?

Is this acceptable and normal?

Should this tooling be disclosed? (it was not – should it have been?)

If you were given a choice, would you have opted out of it?

Can we opt out?

Are there GDPR implications? Privacy implications? Should these tools be described in a privacy policy?

Are private messages being scanned and sent to OpenAI?

How long should these assessments be retained, and can we request to see them or ask for them to be deleted?

Once the user’s comments are sent to OpenAI, are they used to train their models?

What will the effect be on our discourse and culture if people know they are being politically profiled?

Where are the lines between normal moderation assistance tools, political profiling and opaque 3rd-party data processing?

I hope that by chewing over these questions we can begin to establish some norms and expectations around this technology. The fediverse doesn’t have any centralized enforcement so we need discussions like this to develop an awareness of what people want in terms of disclosure, privacy, consent and acceptable use. Then people can make choices about which instances they join and which ones they interact with remotely.

And of course there are the other issues with LLMs relating to environmental sustainability, erosion of workers’ rights, increasing the cost of living and on and on. I can’t see PieFed adding any functionality like this anytime soon. But it’s happening out there anyway so now we need to talk about it.

What do you make of this?

I don't like this happening, and there should be transparency in all moderation decisions, but some of these points make no sense.

There is essentially no expectation of privacy on threadiverse platforms. Everything is public and probably already being used to train models.

There is no private messaging system. Direct messages are unencrypted and potentially visible to any instance admins. They should not be used to share anything sensitive.

Thank you for calling this out. I think people assume that since it's held by private instance owners that the fediverse is secure. I've posted this comment many times, that no, the fediverse is quite literally by design open and unencrypted.

A post is literally blasted out to anyone who listens, same with comments, upvotes, downvotes, everything can be saved, stored, and used for whatever anyone who listens wants. It should be completely assumed that nefarious agencies are currently listening and storing everything we do here. This is by design. It's the tradeoff we have of having an open platform. Anyone can spin up a server, and that means anyone.
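To make the point above concrete, here is a minimal sketch of what a vote can look like when it arrives at a remote server. The `Like` and `Dislike` types and the `actor` and `object` fields come from the ActivityStreams vocabulary; the URLs and the `describe_vote` helper are invented for illustration, and real payloads carry more fields than this.

```python
import json

# Hypothetical vote activity as a remote server might receive it.
# All URLs are made up; only the type/actor/object shape is standard.
incoming = json.loads("""
{
  "@context": "https://www.w3.org/ns/activitystreams",
  "id": "https://instance-a.example/activities/dislike/123",
  "type": "Dislike",
  "actor": "https://instance-a.example/u/alice",
  "object": "https://instance-b.example/comment/456"
}
""")


def describe_vote(activity):
    """Return (voter, direction, target) for a vote activity, else None."""
    direction = {"Like": "up", "Dislike": "down"}.get(activity.get("type"))
    if direction is None:
        return None  # not a vote (e.g. a Create or Announce activity)
    return activity["actor"], direction, activity["object"]


vote = describe_vote(incoming)
print(vote)
```

Nothing in the payload is hidden from the receiving server: the voter's identity travels with the vote, which is why any federated instance can store or display it.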

DMs are similar, they're blasted out to the other server. If the server admin of the user in question wants to read them, they can. Lemmy/the fediverse is not a secure messaging platform. That's why the Lemmy devs literally put a Matrix handle option in the profile, to encourage people to use Matrix instead. A DM on here should be simple, to the point, and if need be, inviting them to speak on something secure.

Edit - As a perfect example of the fact that there should be no expectation of privacy here on Lemmy, as an Admin myself, I can see that @A_normy_mouse has been downvoting all of my comments here. Absolutely everything here is public and visible, even if I weren't an admin there are tools to view this, regardless of your opinions. It's imperative that everyone understand this.

Edit 2 OP as well has downvoted me. @rimu@piefed.social I'm sorry if you disagree, but it's irrelevant. You should assume that everything you do here can and will be used in ways that you disagree with; that is the nature of the fediverse. Mastodon, Pixelfed, Piefed, Lemmy: ActivityPub is an open and unencrypted protocol. Even if it were encrypted, you still put 100% of your trust in your server admin, and beyond that each server admin you are blasting your messages out to.

I'd highly suggest accepting this fact before trying to push for rules. The very nature of the Fediverse is that no one can dictate rules, and to do that the tradeoff quite literally is that everything is open and unencrypted.

Another way to think of this. I run a server myself. I made my own rules and decided how to run it. Now your server starts sending activity to my server. That's your server's choice. I didn't agree to your rules, I may disagree with your rules, but you're sending your data to my server, over which I have complete and total ownership. I didn't click accept on a ToS, I didn't agree to anything. Hell on my server I could literally have a "By sending me your data you accept that I can do whatever I want with your data". You sent me your data, I quite literally can do whatever I want. (Personally I won't, but that's how you should think of the fediverse)

While you are technically correct, you're implying that the "natural" state is a good enough state and nothing should be done about it.

My house has walls and a door; it doesn't mean anyone can do anything they want with it. Even if the windows are clear, you're not supposed to install a camera that watches my bedroom. Even if the door is open, you're not supposed to enter. As a society it has been decided that we should respect each other, respect each other's privacy. We have created rules, some written down and some implicit, for how to interact with each other.

That is the point of OP. The "natural" state is whatever exists with the technical means, but that doesn't mean it's ok (or not ok): do we want to respect each other? To take care of each other? I very much want that, because the technical means should be only a means to an end, and in that end I want respect. The technical means, to me, must adapt to the end, not the other way around.

I mean with the fediverse your house becomes more like a library, more still, everyone gets a copy of what you have that they never have to return. You can ask people not to do x or y with the text but at the end of the day there is nothing you can do to enforce it, sans defederation of course. But the main sticking point, data being fed to a 3rd party LLM, is moot if we're talking about OpenAI, which already crawls lemmy.world. Or in fact any website intended to be found via search engines (and then some). Anything you post on lemmy.world (e.g. the instance hosting this thread) will already be added to the ChatGPT training set.

lol @ Rimu downvoting your post. Be careful he’s probably going to make a hit piece against you next!

Or just delete them entirely from piefed.social 😂

That's what he does when he doesn't have anything he can say against you.

Idk. This and previous threads just lead to them saying "well, you just can't be trusted" or "why don't you believe me over your lying eyes".

Those removals happened in the context of a mod calling Rimu a zionist (which he's not)

It didn't happen out of nowhere.

He's been doing that for a long time.

Those removals happened in the context of a mod calling Rimu a zionist.

Is that supposed to make it better?

Is that supposed to make it better?

Rimu is not a Zionist. It's this wrong accusation that escalated the tensions. I'll clarify my comment.

That didn't address anything even with the clarification, as it's his go-to response. He's been doing it since the instance was stood up.

In this specific case, even the dbzer0 admin agreed it was undeserved:

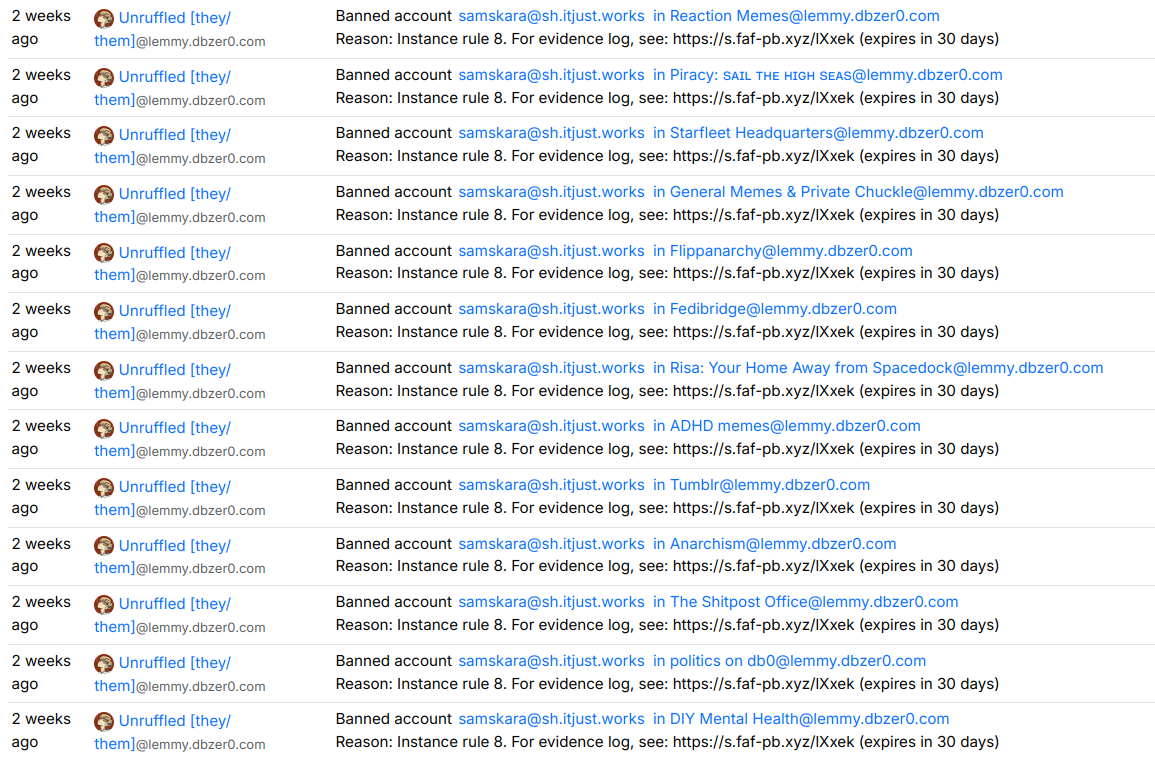

https://lemmy.dbzer0.com/modlog?userId=7015938

Which other removals from Piefed.social are you referring to?

What are you talking about? It's nothing to do with db0 and rimu. Rimu will delete accounts off piefed.social if a user displeases them or mwog tells them to.

How did you get from "rimu deletes the accounts of people they don't like" to db0 banning rimu?

Btw with this and the statistics post it may be better for everyone if rimu was still banned

Edit, oh just using rimu's talking points to also distract. Blaze please please please stop being star struck by rimu

https://lemmy.world/comment/23565992

Blaze is here to defend Rimu at any cost, even ignoring everything you are saying.

I love Blaze, they're regularly upstanding. I don't know why they are really willing to go to bat for Rimu.

I’ve only seen them complaining tbf. Every time I make a comm he’s there to try to get everyone to use his one instead.

He's trying to move people off of .world which I like. And he's previously defended us from when MWoG would make shit up, like Rimu/.world/feddit is now.

I just don't understand why he supports the obvious intentional issues

You're hyperfocusing on one point, as if that's the only part that matters and ignoring all the rest. I don't consider that helpful, hence the downvote.

What is especially unhelpful is abusing your admin access to call out people's votes. Leave that shit alone.

That is quite literally my point. Everything, absolutely everything here is open and can be used however any instance owner wants. You can say "leave that shit alone", but there is no obligation to whatsoever.

You should assume every instance owner can and is viewing all of your private data, sending it through whatever LLM/mod tools they want. Are they? Probably not. But they can, and there is no obligation not to.

And there is no obligation to federate with you. Please don’t publicly discuss users’ votes.

You are correct, and it's what I was suggesting op do if they don't like what other admins do. It's right there.

As for viewing votes there's a whole site dedicated to showing everyone's votes, it's called Lemvotes. Feel free to say please, but people are still going to do it. Votes and everything elsewhere are very much public, best to get used to that now.

As for viewing votes there’s a whole site dedicated to showing everyone’s votes, it’s called Lemvotes

Which is why we’re not federated with it. Pasting lemmy.ml links returns a 404 error.

Great. You show me a 10-foot fence, I'll show you an 11-foot ladder. Go ahead and audit every server that federates with you, send them a questionnaire. There's absolutely nothing stopping the next Lemvotes from setting another server up, and there is nothing stopping any three-letter agency from setting up their own listening server.

Yeah you can do that but now you're on my do-not-trust list. And probably a few other people's lists.

I appreciate you being open about your opinions because now I can make a more informed choice about interacting with you and the instance you run.

Don't you think everyone deserves the information they need to choose which instances they want to interact with, according to whatever criteria is important to them? Even if your criteria are different?

GOOD. NO ONE should be trusted here! I'm just some guy who decided to spin up a server, there should be zero trust! THIS IS MY POINT.

Don’t you think everyone deserves the information they need to choose which instances they want to interact with, according to whatever criteria is important to them? Even if your criteria are different?

This depends on the trustworthiness of the admin themselves, and even then every admin is just some person who decided to spin up a server, just like me. Trust is built and earned, it shouldn't be implicit. The option you have is to defederate, or leave and join another server.

I'm really not trying to be an asshole here, but your post is what caused me to do this. This is not a unique post, this is a fundamental core principle of the fediverse that every user must understand. That by being here, it is not a private secure place, you are quite literally blasting every comment, post, and upvote, to whoever wants to listen. Literally everyone. Any semblance of privacy is purely a UI trait. Rules/guidance is purely 100% based on what each server owner chooses.

That's a stupid take, you're basically shooting the messenger here.

Stop throwing a tantrum like a child. You ranted. It was explained to you why your tantrum is pointless. Move on.

It's absolutely wild that the obvious needed to be pointed out at all, and that the reaction to it was 'you just made my list, buddy'.

Yeah you can do that but now you’re on my do-not-trust list.

Are you J Edgar Hoover? Anyone who gives you mild criticism must be tagged and marked for distrust?

This is the person calling you a tankie. Someone so afraid of words that they need a hallucinating robot to hold their hand and confirm that everything is a secret plot against them. The absolute only way I could see this being useful is for something like trying to sniff out if a Lemmy.world mod account is a leftist infiltrator or not.

You could maybe run a speech pattern comparison but that’s it. For everything else you just made Stupid Reddit and the purpose of their forum is to feed training data to ChatGPT so that it can profile Fediverse users.

abusing your admin access

Everyone has admin access, including you....

I don't think I do.

You're hyperfocusing on one point, as if that's the only part that matters and ignoring all the rest. I don't consider that helpful, hence the downvote.

Huh? What exactly are your expectations here, that everybody addresses every point in every comment? You just listed like 2 dozen points of discussion in the op, every comment would be an essay. Scrubbles has a good point that should honestly be foundational to the discussion, and they're being respectful, so I really don't understand what your problem is here.

If you really wanted their take on your other points, instead of downvoting you could've just asked for it. You know, have a discussion? Or just let it stand alone, it's still a valid take.

What is especially unhelpful is abusing your admin access to call out people's votes. Leave that shit alone.

Anyone (anyone) can be an admin of their own instance, there's absolutely nothing exclusive about it. Hell you don't even have to go through the work of doing that, there's other tools. Lemmy/Piefed are super open, by design.

Anyone (anyone) can be an admin of their own instance, there’s absolutely nothing exclusive about it. Hell you don’t even have to go through the work of doing that, there’s other tools. Lemmy/Piefed are super open, by design.

And any admin can ban someone or defederate from someone’s instance for doing it.

Lemmy/Piefed are super open, by design.

If Lemmy could have kept the votes hidden, it would have, but the nature of federation precludes it. So instead it does the best it can to not make them obvious. In the case of lemvotes.org, lemmy.ml is defederated from it so that it doesn’t have access to our votes.

Our instances voted not to defederate from lemvotes, so votes are effectively public.

What is especially unhelpful is abusing your admin access to call out people’s votes. Leave that shit alone.

Agreed. This is poor form, and ban-worthy if continued.

It's occasionally worth calling out that votes are also public. I think twice before hitting those buttons

Why would you care if anyone knows how you vote on comments?

The entire ad industry has been collecting preferences, likes, dislikes for decades. It's one of the most profitable pieces of information.

No data is as useful as what makes you personally engage.

Not OP, but the votes being public (not only on comments but also on posts) make it really easy for someone with malicious intent to generate a profile on your interests, political and sexual orientation, health/mental issues, addictions and so on. It's a goldmine of data that should be protected.

This only makes sense if your account contains personally identifiable information. If it doesn't, then what can really happen?

It's not that hard to identify people online. My account is definitely not private

Yes, but then you are willingly accepting the risk of posting in a fully public forum anyway. What I'm saying is, you could, if you wanted, not have personally identifiable information on your account.

The risk associated with being on a public forum has changed massively. Yes the data was always out there, but the ability to turn it into personally identifying information was not.

People are still grappling with that change.

What I'm saying is that's actually very hard unless you run a super sterile account on purpose. Even just your writing style is a pretty good fingerprint. Your IP. Any pictures you've posted. It's a rough world out there for privacy

That's true, but this person also knows they are not hiding. There are countless others that don't. That's the reason they wrote what they wrote.

Okay, so, my first Reddit account was back when I was a clueless teenager. I posted all sorts of information that, in retrospect, was pretty foolish to post, including my specific location, my personal interests, and different clubs and organizations I belonged to.

I was using a pseudonym, of course. And I thought I was being safe by not giving my real name, my real address, or anything that I thought could identify me specifically. But there were probably hundreds of people in my high school who could have identified me by correlating my different posts and profiling the one person with that particular combination of interests and organizations.

And if I was still using that account, it would absolutely be possible to link me, security conscious as I am, to my high school self, and link that to my LinkedIn account. And quite possibly get me fired for my clueless teenage shit posting 😆

What I'm getting at is, one, lots of people do post personal information. And two, PII is a much broader category than people think, and if your account has a long post history you probably gave up a lot more information than you think you did.
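The correlation risk described above can be illustrated with a small sketch. Every record and field name here is invented; the point is only that each extra detail, harmless on its own, shrinks the set of people a pseudonymous account could belong to.

```python
from collections import namedtuple

# Entirely hypothetical population; no real data.
Person = namedtuple("Person", "name city club hobby")

people = [
    Person("A", "Springfield", "chess", "climbing"),
    Person("B", "Springfield", "chess", "knitting"),
    Person("C", "Springfield", "debate", "climbing"),
    Person("D", "Shelbyville", "chess", "climbing"),
    Person("E", "Springfield", "robotics", "knitting"),
]


def matching(population, **clues):
    """Return everyone whose attributes match all the given clues."""
    return [
        p for p in population
        if all(getattr(p, field) == value for field, value in clues.items())
    ]


# Each clue is the kind of detail that leaks out across a long post history.
step1 = matching(people, city="Springfield")
step2 = matching(people, city="Springfield", club="chess")
step3 = matching(people, city="Springfield", club="chess", hobby="climbing")

print(len(step1), len(step2), len(step3))  # 4 2 1
```

With three clues the candidate set is down to one person, even though no name or address was ever posted.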

You could still be identified by a lot of factors and the combination of those. IP address, email if provided, cookies + referrer on clicked links or loaded external images, browser fingerprint, clues from actual content in comments and posts, ... It's not that hard, a whole industry lives on this kind of surveillance data collection.

this gets into another thing with me, but I don't care for public voting at all. I would vote a lot if it was private to me and only affected my feed.

Sometimes people ban based on votes, so some might worry about that?

There's also those creepy people that take it upon their next fine hour to crawl through people's histories. Trying to find anything that could boost the height of their soapbox and distressed egos. It always backfires, obviously, but it doesn't take away from the fact that some really weird people are here and no one wants to have to deal with them.

Occasionally people have meltdowns and accuse/threaten other users for daring to vote a certain way, presuming specific motives for doing so

Sometimes you get harassed by lunatics.

.ml I call you out.

https://lemvotes.org/comment/lemmy.world/comment/23550342

here's your comment

First of all: I don't like this either.

There is no private messaging system. Direct messages are unencrypted and potentially visible to any instance admins. They should not be used to share anything sensitive.

Agreed, but that admin is breaking his promise, duty, responsibility (call it what you will) if they then upload these messages to an LLM for evaluation.

I would argue for this being actually illegal, at least under the GDPR.

But that was just one of many potential conflicts @rimu raised. We should concentrate on the real conflicts of LLM comment moderation.

edit: yes, I have actively downvoted all comments I disagree with under this post (and upvoted all I agree with). I don't usually do it so much, but this post is a sort of opinion polling.

It's very clear on signup, on the READMEs, even on the DM portal itself, that messages are unencrypted and there is no sense of privacy, and that admins have full visibility and can do what they want with them.

Agreed, but that admin is breaking his promise, duty, responsibility (call it what you will) if they then upload these messages to an LLM for evaluation.

There is no promise, duty, or responsibility that an admin has beyond legal and what they themselves promise. The fediverse is great in that if you disagree with your admin, you are free to leave and choose a different one.

As for GDPR, feel free to argue it, but when it's claimed at every turn that messaging is unencrypted and basically open, well, I don't think it'd hold up. It literally says to go use Matrix or something else.

you are free to leave and choose a different one.

I only have that freedom if the admin tells me that they use LLMs in this manner or if they federate with instances that do. At the moment everyone is in the dark.

and it will continue to be. Again, you need to understand this. There are no rules, guidelines or anything that an instance owner needs to follow beyond whatever legal requirements they have in their specific jurisdiction.

So, I guess in your parlance, you are correct, you do not have that freedom. Even I, as an instance owner, do not have that freedom, because everything I'm typing here is being sent out to as many servers as are listening. By being completely open so that anyone can spin up a server and listen for activity, it literally means that we are open and any server can listen for activity.

Anyone can spin up a server, create some LLM bot, and start replying to anyone they want. That instance can be defederated of course, but that is the only tool. This is what you signed up for, this is the open and free internet. We do not have any walls here.

Check this out - https://www.structural-integrity.eu/the-politics-of-decentralization-and-the-libertarian-allure-of-mastodon/

by definition, that is adding walls and gates to the fediverse, which is why this whole thread started. The fediverse was specifically designed to oppose those features at all, specifically because of what we're seeing now. Gates have keys and gatekeepers, and as we've seen in the greater world, those can change hands in the blink of an eye.

only recourse is to move one’s account to another place in hopes of finding more benevolent admins and more protective content moderation. Being able to move one’s account to another place within Mastodon is usually praised as a feature. When it comes to digital safety however, it is not. It relegates minorities to a digital life on the run, hoping for safety in a system that has no mechanism to actually enforce it.

Maybe some day we'll come up with a technical solution that allows communities to group-administer fediverse servers, but until then, most of us are depending on the goodwill of server admins who let their users access services for free, and do all the work of maintaining the server. Being able to vote with your feet and create your own server seems so different than a "digital life on the run".

The idea of a fediverse alliance where admins group together and create a community between instances is a good one though. There is a start to that with stuff like fediblock, but it is still mostly in the benevolent dictatorship model of governance, which I think is hard to get around since it is a lot of work to administer a server, even moreso if it were democratically run, and most of us users depend on free access.

You're a fucking AnCap? That explains soooooo much.

Wait, why do you think Rimu's an AnCap?

The Cyberlibertarianism bs and its links to crypto and what not.

It looks to be a criticism of these, not in support

Maybe you should read that post before commenting. It's anti-AnCap at its heart.

Yep

Not sure about ancap, I think he's just a plain old liberal, maybe a neoliberal.

You should assume everything you post here is being used to train LLMs. It doesn't take an admin to do so. It takes anyone who feels like looking. And there's already evidence that we're being scraped.

To expand on standards of transparency in moderation decisions:

Lemmy was built with a public moderation log by design. The ethos of the platform includes accountability through transparency. Every action is recorded and preserved (short of defederation or instance shutdown).

This makes moderation auditable. Mods literally cannot do (much) shady stuff in secret. In essence, moderation policy is discernable from the logs. That's part of why well-run communities have the rules clearly defined and mods follow their written policy.

If a community/instance wants to make political alignment a moderation offense, they're free to do so. Many communities/instances are quite explicit about this. If a community wants to make moderation completely arbitrary, they are free to do so. That is somewhat less common, but also not unheard of.

In truth, any community can be designed and moderated in any way whatsoever that the mod chooses.

However, the success of a community depends on the quality of the content and the quality of the moderation. Good content brings people in, but bad moderation drives people out. When the moderation is unfair, it is bad for the health of the community, and ultimately bad for the health of the platform.

It is my experience that transparent moderation, such as announcing changes in policy, techniques, etc., is less work in the long run. It takes a bit of time and attention to roll out changes when they are open for community feedback, but that feedback will come in one way or another. If mods don't provide a formal outlet, then users will make one. Mods operating opaquely give up their right to have the conversation on their time and terms. They also miss out on the wisdom of the crowd. I've been in many situations where community feedback provided a valuable insight or tool to face an obstacle through open discussion about policy.

All that being said, one of the major obstacles to growth of the Threadiverse is the woeful dearth of moderation tools. It's extremely time intensive to do basic things like identifying alt accounts, vote manipulation, bot behavior etc. It is also subject to a lot of human error. This makes it discouraging for people to moderate. I have heard about tools that use AI to detect CP content and remove it quickly, which I think we can all agree is a good use of the tech. Tools like this are not built into the platform, but cobbled together by volunteer mods and admins to keep the platform safe, legal, and sustainable. If they were built in, then moderation would be far easier (and therefore likely better).

I have heard about tools that use AI to detect CP content and remove it quickly

i think we can thank db0 for those as well

No AI is bad, db0 is an evil instance Chairman Rimu has decreed it.

That's presumably (and hopefully) not GenAI, but a much lighter classification model built with the sole purpose of judging if an image is problematic, I have no problem with those.

Water is wet. Zionist disapproves of Zionist moderation.

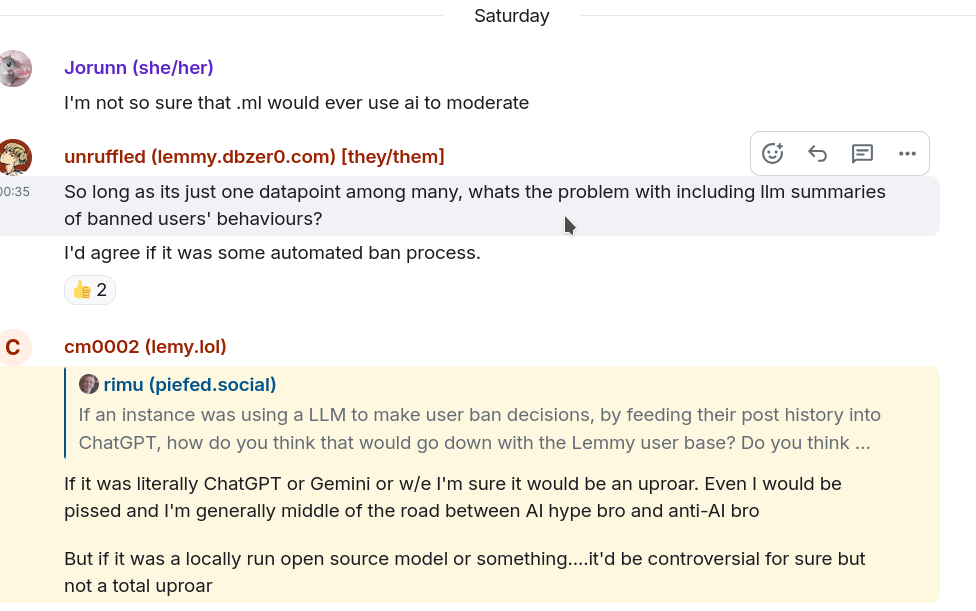

You talk about instances utilizing this tooling, but in your comments you admit it's just some mods. This is misleading, as talking about instances doing it assumes admin access and relevant instance policy, something which invites calls for defederation (as can clearly be seen from the comments in your post).

A random mod doing something is not the same as an instance doing it. Literally anyone can be a mod and they don't get any more access than an anonymous account by doing so.

This is the second time in one week I see you throwing careless statements like chum in the water. I can't help but notice a pattern emerging.

If the instance admins tolerate this, they are also responsible for it.

Are they maybe unaware? I wouldn't point fingers too quickly...

It's unruffled, an admin.

If they

dbzer0 is explicitly pro-AI, so this “controversy” is a nothingburger to them (and me).

Communities about Anarchism, Generative AI, Copylefts, Neurodivergence, Filesharing, and Free Software. (And Math!)

unlike your instance admin which not only tolerates but amplifies defamatory screenshots of other instance admins which can be debunked very easily https://lemmy.dbzer0.com/post/67963752/25781975

Is this whataboutism?

It's sorta relevant since this is an ongoing smear campaign to manufacture consent for the defederation of db0, but don't worry, the precious fedditors are safe from seeing their dearly beloved De*tschland being called mean names while it collaborates with a genocidal occupation regime and other things that might not get printed in Der Stern.

As an instance admin, you should ban those mods.

Why?

I suppose if you have an instance wide policy against AI moderation, then any mod using AI for moderation is going against the rules. But what anyone "should" do on their own instance is really up to them.

It breaks the OpenAI boycott, and the tool can be abused to get excuses to ban someone the mod wants banned for personal reasons.

It breaks the OpenAI boycott

There’s no reason to assume that it’s using OpenAI.

the tool can be abused to get excuses to ban someone the mod wants banned for personal reasons.

Why make decisions based on such a hypothetical? And anyway, any mod automation tool can be abused in the same way.

Dr. Hans-Georg Moeller: Guilt Pride: A German Vanity Project Conquering the World

Sometime in the 2000s, a group of mostly Turkish women from an immigrant group called Neighborhood Mothers began meeting in the Neukölln district of Berlin to learn about the Holocaust. Their history lessons were part of a program facilitated by members of the Action Reconciliation Service for Peace, a Christian organization dedicated to German atonement for the Shoah. The Neighborhood Mothers were terrified by what they learned in these sessions. “How could a society turn so fanatical?” a group member named Nazmiye later recalled thinking. “We began to ask ourselves if they could do such a thing to us as well . . . whether we would find ourselves in the same position as the Jews.” But when they expressed this fear on a church visit organized by the program, their German hosts became apoplectic. “They told us to go back to our countries if this is how we think,” Nazmiye said. The session was abruptly ended and the women were asked to leave.

https://jewishcurrents.org/bad-memory-2

Cry quietly

Ahh sorry - I just checked more closely. It's an admin doing it.

And your recent post showed that there's no such LLM-based admin tooling and that you just misrepresented what the existing tool does...

I think this will exemplify the beauty of federation. If I find out my instance mods are running all of my comments through a company’s ai model, I’ll switch instances. This is in great disparity to something like Instagram or Snapchat where every photo I post is immediately fed to ai and my only options are: be okay with it, never post, or delete Instagram.

Mods of any instance you're federated with can do this

They don't need to federate even, they're probably already scraping everything.

Although it should only matter if you chose to subscribe to that community where the mod is in charge.

Sure, but we all know how power mods be.

Obviously, but unless the modlog is being spammed with many entries, and I receive notifications for each one individually (which is actually happening right now from communities on dbzer0, and unlike Lemmy, PieFed actually sends notifications for such events, but I will set that sub-topic aside for a moment), then in theory I do not care if I am (preemptively?) banned from let's say c/conservative@newreddit.com or c/extremetankiedeathsquad@thereallemmy.fuckallwesterners (to be clear, these are hypothetical made-up names!🤪), if I never wanted to post or vote on their content anyway? They are even doing me a favor if it prevents it from showing in my feed (which it would on Lemmy iirc, but on PieFed it would not).

But you don't even need to be a mod to do that. Anyone at any moment can run someone else's entire comment and post histories through an LLM.

Also true. It is called social media, so I’m pretty okay with anything happening to any of the comments/posts I make. All the same, I do expect vaguely better behavior from moderators.

Seeing that every single post we make is completely public there is a high chance someone out there already used all your comments for training an AI model. As you say, the only thing you can do is just not post anything anymore.

This is what reddit does, and it destroyed their communities and left most subs full of bots. reddit also lets you hide your history, so you can't sniff out bots or chronic spammers.

Yep.

Unless somebody manages to ... inject a hostile/unauthorized LLM as a mod or admin or something, in an instance they're not an admin of, in a comm they're not a mod of...

Then people react by personally blocking or perhaps instance wide defederating or maybe conceivably someone actually uses this in a generally good way, to identify trolls/sock puppets.

As to... LLM scraping of comments?

Lemmy is public, anyone can do that.

I've done it to myself with a local LLM hooked up to a search engine, and I'm not a mod or admin of anything.

Hence why you probably should use a pseudonym and not give too much information about yourself, if you're concerned about privacy... same... rules the internet has always had.

I suppose that instances could implement various anti-scraping measures, but that's never going to be 100% effective as scrapers vs anti-scrapers has also basically always been a constantly escalating arms race.

I don't think the privacy issues here are too salient. Pretty much everything on the fediverse is public already and has likely federated outside any particular region like the EU, so GDPR doesn't really have any teeth. The exception to that would be if instance admins are using database access to also feed private messages to an LLM (especially a corporate LLM). I know that the "private" in private messages on the fediverse can be conditional...but it should at least be considered private from LLMs as an expectation since those messages are inaccessible to things like scraper bots or listening instances designed just to harvest data.

My biggest concerns here would be twofold:

- False positives - LLM sycophancy is a thing. So, I worry that if you ask an LLM to dig through a big pile of text looking for a thing, that it will tell you that it found that thing...even if it is completely removed from context or completely made up. The false positive rate might be low (I have no idea), but I guess I just don't trust the LLM enough to let it take the wheel with stuff like this.

- Outsourcing moderation - LLMs are not going to be up to the task of moderating everything, just ask digg. However, tools to help moderators effectively do their jobs are helpful as well. There is a balance to be struck here. I think, for me, something like asking an AI essentially, should I ban this person, just feels like you are outsourcing your decision making too much. It is too far on the automation side of the scale for my tastes.
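To put rough numbers on the false-positive worry above, here is a minimal sketch of the base-rate arithmetic. Every input (population size, prevalence, detection rate, false positive rate) is a made-up assumption for illustration; nobody in this thread has measured the real rates.

```python
def expected_flags(population, prevalence, true_positive_rate, false_positive_rate):
    """Return (true_flags, false_flags, precision) for one screening pass."""
    violators = population * prevalence
    non_violators = population - violators
    true_flags = violators * true_positive_rate
    false_flags = non_violators * false_positive_rate
    total = true_flags + false_flags
    # Precision: of everyone flagged, what share actually did the thing?
    precision = true_flags / total if total else 0.0
    return true_flags, false_flags, precision


# Hypothetical inputs, chosen only to show the arithmetic.
true_flags, false_flags, precision = expected_flags(
    population=10_000,
    prevalence=0.01,
    true_positive_rate=0.90,
    false_positive_rate=0.05,
)
print(f"true flags: {true_flags:.0f}, false flags: {false_flags:.0f}, "
      f"precision: {precision:.1%}")
```

With those invented inputs, false flags outnumber correct ones by more than five to one, even though a 5% false positive rate sounds low. The point is only that the share of wrong flags depends heavily on how rare the thing being searched for is.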

All that said, people can run their instances how they want. I don't really have strong opinions on LLMs/AI in general, I just kinda hate big tech companies. That is my foundational belief in the work that I do for the fediverse - fuck big tech and the oligarchy they have built/funded in my country. That is really the only axe I have to grind in all this.

No that is not correct, GDPR requires you to list the reasons and partners you share the data with.

So like

- We share data with any server that federates with us.

?

Federation is something different

Not from a legal perspective

It is. The federated data is the same public data available through the website, just delivered in a protocol for interoperability. It is not non-public data that they share with a third party for further processing or profit.

What non-publicly accessible data could a mod that screens comments, possibly federating from other instances, share with OpenAI that OpenAI doesn't already scrape?

We can see in the screenshot what data they use: date, comment id, community id, post id, comment text.

See there is the major difference in between pulling in data and pushing in data to do some kind of computational work. But as someone else already pointed out, if they anonymize the data and don't send the usernames together with the text, it should actually be fine again.
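For what "don't send the usernames" could look like in practice, here is a minimal sketch. The field names loosely follow the list from the screenshot, but the record layout, the `strip_for_export` helper and the secret are all hypothetical. Note that the comment text itself can still be searched for publicly, so this is pseudonymization at best, not real anonymity.

```python
import hashlib
import hmac

# Hypothetical field names, loosely following the fields listed above.
ALLOWED_FIELDS = ("date", "comment_id", "community_id", "post_id", "comment_text")
ID_FIELDS = ("comment_id", "community_id", "post_id")


def pseudonymize(value, secret):
    """Replace an identifier with a keyed hash only the instance can reproduce."""
    digest = hmac.new(secret, str(value).encode("utf-8"), hashlib.sha256).hexdigest()
    return digest[:16]


def strip_for_export(record, secret):
    """Keep only allow-listed fields and hash the ids before data leaves the server."""
    out = {}
    for field in ALLOWED_FIELDS:
        if field not in record:
            continue
        value = record[field]
        out[field] = pseudonymize(value, secret) if field in ID_FIELDS else value
    return out


record = {
    "username": "alice@example.social",  # made-up account, never exported
    "date": "2025-01-01",
    "comment_id": 123,
    "community_id": 7,
    "post_id": 456,
    "comment_text": "Example comment body",
}
exported = strip_for_export(record, secret=b"instance-local-secret")
print(exported)
```

Using an allow-list rather than deleting known-bad fields means a newly added column (say, an email address) is not exported by accident.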

What's the difference? Seems to me pulling in data lets them build a way better profile than some mod handing out crumbs or?

Well, he is the service provider his users signed up to, so if he actively pushes data anywhere it needs to be in line with the privacy agreement presented to those users, which is supposed to show where the data goes and why.

And if all the AI does is manually flag a post for human review?

Without going into the issue itself, it is such a ridiculous waste to use an llm for something that a far simpler model could do like 100x faster and locally for essentially free...

Just search for "machine learning text moderation" and you will find all kinds of options. Not to talk about the fact that a simple 4B LLM could do this as well.

One thing I really hate is how LLMs have completely overshadowed the entire ML/AI field and people just use them for everything.

Using a trillion parameter LLM model for basic text moderation is like using a gaming rig to play candy crush.
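As a rough illustration of the "far simpler model" idea, here is a tiny multinomial naive Bayes text classifier in plain Python that runs locally. The training samples and the `flag`/`ok` labels are invented for the example; a real setup would need a proper labelled dataset and evaluation, and this is not what any instance is known to run.

```python
import math
import re
from collections import Counter, defaultdict


def tokenize(text):
    """Lowercase the text and split it into simple word tokens."""
    return re.findall(r"[a-z']+", text.lower())


class NaiveBayes:
    """Multinomial naive Bayes with add-one (Laplace) smoothing."""

    def __init__(self):
        self.word_counts = defaultdict(Counter)  # label -> word -> count
        self.doc_counts = Counter()              # label -> number of samples
        self.vocab = set()

    def fit(self, samples):
        for text, label in samples:
            self.doc_counts[label] += 1
            for word in tokenize(text):
                self.word_counts[label][word] += 1
                self.vocab.add(word)
        return self

    def predict(self, text):
        total_docs = sum(self.doc_counts.values())
        scores = {}
        for label in self.doc_counts:
            # Log prior plus the sum of smoothed log likelihoods.
            score = math.log(self.doc_counts[label] / total_docs)
            total_words = sum(self.word_counts[label].values())
            for word in tokenize(text):
                count = self.word_counts[label][word] + 1
                score += math.log(count / (total_words + len(self.vocab)))
            scores[label] = score
        return max(scores, key=scores.get)


# Toy, made-up training data: far too small for real use.
TRAINING = [
    ("buy cheap pills now", "flag"),
    ("click this link for free money", "flag"),
    ("free pills click now", "flag"),
    ("thanks for the helpful answer", "ok"),
    ("I disagree but that is a fair point", "ok"),
    ("here is the link to the documentation", "ok"),
]

model = NaiveBayes().fit(TRAINING)
print(model.predict("free money click now"))
print(model.predict("thanks, fair point"))
```

Training and prediction here take microseconds and no text leaves the machine, which is the trade-off being argued for. The weakness is the one raised in the reply: a bag-of-words model has no sense of context.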

I've been doing a lot of work around this area and the issue tends to pop up around context. There are instances where a Haiku model will catch something far more accurately than gpt-oss-safeguard. Obviously you pay for that in cost. Realistically, you need a full system for a proper implementation: some flows go to a tiny LLM, some exclusively to ML, and some to a higher-intelligence model.

People on the fediverse are generally pretty anti ai but it's basically impossible to scale a platform without AI moderation. I would fully welcome any instance trying an implementation of [Osprey](https://discord.com/blog/osprey-open-sourcing-our-rule-engine) with LLMs.
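The tiered flow described above could be sketched roughly like this: a cheap local scorer handles the clear cases and only the uncertain middle band is escalated to a more expensive model. Both scorers, the thresholds and the `Decision` type are hypothetical stand-ins, and this is not how Osprey works. The sketch also never bans anyone; the strongest outcome is a flag for a human.

```python
from dataclasses import dataclass


@dataclass
class Decision:
    tier: str      # which scorer made the call
    action: str    # "allow" or "human_review"
    score: float


def keyword_score(text):
    """Stand-in for a small local classifier: share of words on a made-up blocklist."""
    words = text.lower().split()
    if not words:
        return 0.0
    hits = sum(word in {"spam", "scam"} for word in words)
    return hits / len(words)


def slow_model_score(text):
    """Stand-in for a larger model call; here just a fixed placeholder rule."""
    return 0.8 if "scam" in text.lower() else 0.1


def route(text, cheap_score=keyword_score, expensive_score=slow_model_score,
          low=0.2, high=0.9):
    """Settle clear cases cheaply; escalate only the uncertain middle band."""
    score = cheap_score(text)
    if score < low:
        return Decision("cheap", "allow", score)
    if score >= high:
        return Decision("cheap", "human_review", score)
    score = expensive_score(text)
    action = "human_review" if score >= 0.5 else "allow"
    return Decision("expensive", action, score)


for sample in ["hello there", "spam scam", "is this a scam", "no spam here please"]:
    print(sample, "->", route(sample))
```

Where the `low` and `high` thresholds sit decides how much traffic reaches the expensive tier, so they control both cost and how often context-heavy cases get a second look.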

I want to train a local ML system to recognize my personal handwriting. Is that possible?

Yes, a Convolutional Neural Net could do it or a plain Neural Net even.

You'll want to create a sample of your handwriting with the letters isolated into their own picture and labelled. Maybe 10 of each letter to start with? If you get bad results with that make more samples. Each picture should be the same size as all the others. The starter course of Machine Learning I took way back when had us using a database of labeled numbers, each picture was 10 pixels by 10 pixels.

Then pick a CNN model (or better yet several) and train them on your handwriting. You can find some here: https://huggingface.co/models?other=CNN

Pick the one that does best. As part of that course I mentioned, I created an evolutionary algorithm to mutate, combine, and propagate CNNs to find out the best configurations for identifying images. The ones that performed the best got to combine with other top performers.
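A minimal sketch of that kind of evolutionary search, assuming a made-up search space and a placeholder fitness function. In the real thing, `stand_in_fitness` would train a CNN with the given configuration on your handwriting samples and return its validation accuracy, which is the slow part.

```python
import random

# Hypothetical search space; a real one depends on the model family used.
SEARCH_SPACE = {
    "conv_layers": [1, 2, 3, 4],
    "filters": [8, 16, 32, 64],
    "kernel_size": [3, 5, 7],
}


def random_config(rng):
    return {key: rng.choice(values) for key, values in SEARCH_SPACE.items()}


def mutate(config, rng, rate=0.3):
    """Randomly re-draw each setting with probability `rate`."""
    child = dict(config)
    for key, values in SEARCH_SPACE.items():
        if rng.random() < rate:
            child[key] = rng.choice(values)
    return child


def crossover(a, b, rng):
    """Combine two parents by picking each setting from one of them."""
    return {key: rng.choice([a[key], b[key]]) for key in SEARCH_SPACE}


def stand_in_fitness(config):
    """Placeholder for 'train this CNN and return validation accuracy'."""
    target = {"conv_layers": 2, "filters": 32, "kernel_size": 3}
    return sum(config[key] == target[key] for key in target) / len(target)


def evolve(fitness, generations=20, population_size=12, keep=4, seed=0):
    rng = random.Random(seed)
    population = [random_config(rng) for _ in range(population_size)]
    for _ in range(generations):
        # Top performers survive unchanged and breed the next generation.
        population.sort(key=fitness, reverse=True)
        parents = population[:keep]
        children = []
        while len(children) < population_size - keep:
            a, b = rng.sample(parents, 2)
            children.append(mutate(crossover(a, b, rng), rng))
        population = parents + children
    return max(population, key=fitness)


best = evolve(stand_in_fitness)
print(best, stand_in_fitness(best))
```

Because the top performers are carried over unchanged each generation, the best score never gets worse from one generation to the next.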

You might also be able to find a CNN specific to handwriting and then fine tune it to yours with your samples.

This is doing it raw and will have a lot of education for you along the way. There may be some prebuilt handwriting model you can just fine tune with easy instructions from the person who made it all wrapped up into a nice bit of python for you. Maybe.

Defederate, no question.

Are you gonna tell us which instance is doing this?

OP already answered this: not at the present time.

You stay far, FAR away from that shit, is what you do.

Scanning people's entire history for political leanings, etc? That's some deeply dystopian stuff right there.

It's easy to forget that these sorts of communities are dictatorships with only as much transparency as the owner wants to share. Usually they're benevolent dictators, so we don't think about it too much. But they can change in a heartbeat - and we don't ever really know what they're really thinking, or doing behind the scenes.

When the mask slips and they reveal this sort of thing, thinking we'll just accept it and keep living under their rule, it's time to read the red flags and GET OUT.

Hopefully someone compiles a list of places that do this stuff, so we can avoid them like the plague <3

Scanning people's entire history for political leanings, etc? That's some deeply dystopian stuff right there.

Yep. It's Cambridge Analytica and Palantir level shit.

Don't give it too much credit. It's Reddit level shit. Current models are so good at providing the kind of reports mods want because Reddit's automated mod tools have been running these assessments on hundreds of thousands of users for years and feeding the results back as training data.

And let's be real, a tool that assesses the public posts of a specific account isn't doing anything different than mods already did. (Not to mention users - how many people, when they get into an online argument with someone, start going through their post history to find something to gotcha them with?). The LLM just does it faster.

All of that was already happening, because all Lemmy posts & comments are public.

Aside from the ethical implications of profiling users or of using a corporately owned server and model to execute this, I see nothing uniquely concerning about this practice that isn't already a risk of federated social media generally.

Every mod on every instance is free to use whatever tools or standards for moderation they want - that's an intentional byproduct of federation. Similarly, the collection of this data for use with llms is a foregone conclusion at this point - there was never any way of preventing that from happening with a federated network.

I think the only thing here to talk about is the way these questions are being framed as a question of intra-instance policy. We already have communities where moderation abuse can be called out and adjudicated - why pose this as a question of instance administration when there doesn't seem to be any evidence for it?

these questions are being framed as a question of intra-instance policy

I think this is just more of the ongoing controversy being spun up against db0.

This week's flavor appears to be more data driven, a 'just asking questions' phase. I guess in hope the whole 'falsifying evidence to make db0 users look like neo-nazis' thing blows over.

Like it's clear there's an effort to rid db0 from the fediverse, and it's just the pretext hasn't been sorted out yet.

I don't doubt it even for a second.

I'm lowkey kind of fascinated this morning with what feels like a moment of real panic among western liberal-democratic institutions (projecting a little from my morning news and coffee). That an anarchist instance is getting this much targeted harassment feels like a microscopic extension of that (if I allow myself to be so bold)

As far as I can tell, dbzer0 isn't even being explicitly called out here, but it has an undeniable dbzer0 flavor to it. If it doesn't come out that this was one of our mods at this point, I'd almost be disappointed.

Yep and it doesn't help that Rimu himself is a very questionable dude. He says he defederated from lemy.lol over their pepe frog logo but honestly I don't believe it is the reason. He hates memes. He said as much to me himself. A while back, someone realized that piefed was hard coded to give negative reputation to certain people, regardless of what settings the admins had made. Piefed is built off of Rimu's opinions and he puts the majority there as an opt-out, not an opt-in. When I made a joking apology for all the memes I post, he said "It's not your fault that the lemmy devs didn't put guardrails in."

If he's that opinionated about memes...

Combine that with the fact that this post has literally no information other than "Trust me bro"? I don't trust Rimu as far as I can throw his garbage platform and I can't even throw the thing because it's digital code. Hell, Mr. Kaplan even had to do a ton of un-fucking of Piefed to get piefed.world to work and was talking with me about it at the time. Just LITTERED with Rimu's stances.

Not to mention that Rimu routinely comes in with preconceived notions that others have to point out the bullshit of and he quietly then steps back from it or refuses to engage entirely and dismisses it out of hand.

Like... the Lemmy devs suck, absolutely. But the only facet in which Rimu is better is he's not a transphobe.

Edit: See below for screenshots. I'm tired of this shit. Yes. He's wildly opinionated.

Edit 2: Lol this comment is not visible on piefed.social. Rimu out here screaming about fiefdoms and hiding things critical of him.

hates memes

builds reddit clone

That's worthy of a meme in and of itself.

A while back, someone realized that piefed was hard coded to give negative reputation to certain people, regardless of what settings the admins had made.

Please don't spread old mis-info or at least back this up with actual links to the source-code (and if we are talking about the same thing, this was clearly debunked).

As for the OP post, this is factually correct and I have seen the evidence. Although maybe Rimu should have been more clear in pointing out that this seems to be not an official instance tool, but rather something some moderators have cobbled together themselves.

Please don't spread old mis-info

I mean, you just did it. The OP post is not factually correct as he stated that it is at an instance level. It is not. It is at a moderator level.

Please don’t spread old mis-info or at least back this up with actual links to the source-code (and if we are talking about the same thing, this was clearly debunked).

Gotta say though, getting real tired of people telling me that I didn't understand conversations I was a part of. No. It was not 'debunked'. It was added under an opt-in toggle after everyone noticed it and called out his bullshit. You might be thinking of something else, but this is what I was talking about.

Piefed is infected with Rimu's extreme opinionated garbage and he only backs down and puts them into a toggle after someone notices it. That's not the behavior of a developer that I find personally trustworthy. So when he's out here making a post that is outright false, claiming that instances are doing something that moderators are doing, I don't trust him. Not to mention the extremely long conversations I had with Mr Kaplan about how Piefed.world needed to be un-rimu'd in order to work as LW wanted it to.

I was referring to a different but similar case where someone intentionally spread mis-information about supposedly hardcoded things that turned out to be a complete nothingburger as all of it was behind an admin toggle. The same seems to be now true for this old issue you specifically pointed out here.

It is true that there is some experimental stuff in Piefed, which is part of the relatively rapid iteration of features, but looking at the code and also the explanations given by the Piefed development team I can really not see any malice in those settings. It is perfectly normal that things get overlooked or implemented partially and when someone reports a bug (like a missing admin configuration setting) it usually gets fixed quite quickly, and at least in my experience without much discussions.

The same seems to be now true for this old issue you specifically pointed out here.

But it isn't. First off, you made an assumption and dismissed my initial complaint. Now you're dismissing this one saying it's basically the same thing when it isn't. Having a long discussion with large admins saying "Hey. What the fuck is this stuff?" only for Rimu to constantly push back and saying how he wants to reshape everything is fucking concerning. The fact it took everyone pushing back against him to add it under a toggle even more so. You'd have a point with the whole "this happens" if this didn't happen with every single major Rimu feature.

But, once again, Rimu is actively pushing misinformation and you have dodged the point that you are doing the same. This is not an admin or instance level problem. Moderators are doing this and claiming this is "instance level" is to be a liar.

It is perfectly normal that things get overlooked or implemented partially and when someone reports a bug (like a missing admin configuration setting) it usually gets fixed quite quickly, and at least in my experience without much discussions.

And, as we all know, your experience is the only universal experience that everyone has at all times. I guess the month long conversation I had with Kaplan, head admin of Lemmy.world, about unfucking Piefed because Rimu filled it with his opinionated garbage didn't happen. I guess the fork of Piefed being created that's taking out all of his opinionated garbage didn't happen. Not to mention his 4chan screenshot scanner (that can be bypassed immediately), the cm0002 filter he put onto piefed.social (that can be bypassed immediately), the blocking of any numbers of 88 put together (which can be bypassed immediately), the private votes that would prevent admins from locating vote brigaders (which can be opted, granted, but after a HEATED discussion in the piefed matrix).

Rimu is over opinionated to a fault and Piefed is the same.

You know nothing of which you speak.

But, once again, the only important point is that Rimu is actively spreading misinformation by claiming this is an instance problem when it is moderators that are doing it. Once again, with action after action, Rimu cannot be trusted.

I guess the month long conversation I had with Kaplan, head admin of Lemmy.world, about unfucking Piefed because Rimu filled it with his opinionated garbage didn’t happen.

So Kaplan knew about the "garbage", but still wanted to go on and keep running Piefed.world?

Either Kaplan's judgement is supposed to be relied on, or not, but that argument seems weird to say the least.

which can be opted, granted, but after a HEATED discussion in the piefed matrix

So it is opted. That people have to convince Rimu to make changes isn't really an argument. People have to also convince the Lemmy devs to make changes on a regular basis, with less success.

So Kaplan knew about the “garbage”, but still wanted to go on and keep running Piefed.world?

Either Kaplan’s judgement is supposed to be relied on, or not, but that argument seems weird to say the least.

Others are doing the same thing. This isn't "weird" or bad judgment. I don't like Kaplan but they did nothing wrong here.

So it is opted. That people have to convince Rimu to make changes isn’t really an argument.

Yes. If you skip over the entire problem, it does appear as if there is no problem. My issue is that he had to have his feet held to the fire over something that minor because he did not disclose it in the first place. If someone keeps trying to sneak shit past my face and then keeps backing down and going "Uwu sorry I add opt out" but then doesn't add opt out for the other opinionated stuff that was not discovered yet, why would I applaud them? They're still doing the bad thing.

Others are doing the same thing. This isn’t “weird” or bad judgment. I don’t like Kaplan but they did nothing wrong here.

What I'm saying is that even though admins know there were some opinions in the software, they still started Piefed instances. And that's in a world where Lemmy exists.

In other words, every instance admin assesses whether the additional Piefed features make it worth it to accept the opinionated aspect of the software.

That's the main value of Piefed here, and that's why so many people prefer it compared to Lemmy. All the features people wanted, be it users, mods, admins, Rimu delivered them, and fast.

They’re still doing the bad thing.

Doing something, even imperfectly, but listening to the users, still seems valuable to a lot of people. Some people obviously dislike it a lot, and expect the software to be written by a perfect person, but the devs behind Lemmy, Piefed and Mbin all have their flaws.

What I’m saying is that even though admins know there were some opinions in the software, they still started Piefed instances. And that’s in a world where Lemmy exists.

I did not state whether or not Piefed.world was created before or after it was found out that Piefed is littered with Rimu opinions. You are making a conclusion you simply cannot make.

In other words, every instance admin assesses whether the additional Piefed features make it worth it to accept the opinionated aspect of the software.

He said, off of a false conclusion from a random assumption.

Doing something, even imperfectly, but listening to the users, still seems valuable to a lot of people.

Brother, you are not listening to a word I have been fucking saying.

He's not doing it imperfectly. HE'S BEING DISINGENUOUS. If I were to talk to you and give you a ton of shit, fill all of it with some useful stuff but the rest is hyper opinionated, you'd expect me to tell you what was opinion and what isn't. Rimu doesn't. People have to discover it and then hold his feet to the fire. That is not listening to feedback over things that you knew were a problem. Especially when he knows it is opinionated and then puts it through anyway without informing anyone, without making a note of it, and expecting others to comb through his garbage and hold him accountable. And you are saying that is perfectly acceptable behavior?

Brother, you have lost essentially all of my respect here. As stated elsewhere, because you started two conversations with me at once, I am not continuing this with you.

Piefed is infected with Rimu’s extreme opinionated garbage and he only backs down and puts them into a toggle after someone notices it.

I personally prefer Rimu taking feedback into account and then making changes than Lemmy devs bluntly closing feature requests widely upvoted by the community because "reasons"

Let's be honest here, it's not like there are so many options we can choose from.

Also, the example you gave above shows more than anything else that Rimu did change his mind.

"All the settings in this screenshot are off by default"

He only changed his mind after someone discovered it. The thing I don't like is his obfuscating his opinions that he's injected into Lemmy. Having to manually discover them and then bug him about adding an opt out toggle is not exactly trustworthy behavior nor is it something I'm going to applaud. He still tried to sneak it in regardless and only backed down when called out. I'm not giving him props for that.

Rimu is like Microsoft Windows.

Adds in things the higher ups felt was needed. Users then discover it and say this is a bad thing to include in software.

Microsoft then says "right our bad, we should have thought twice on this"

Microsoft then does it again a few weeks later, repeat.

Comparing a single open source developer to one of the biggest tech corporations worldwide doesn't really seem relevant.

Adds in things the higher ups felt was needed.

Who are the higher ups in this case? There's no Piefed board of management or investors to answer to.

All of that being said, I'm looking forward the day Pievolution can be run easily, then every instance can make their own choices, and these endless debates will end.

The ironic thing is that people claim the Lemmy devs will do that to Lemmy. And here it's Rimu doing it with Piefed.

He also has silenced my account from piefed.social. None of my comments are federating to that instance yet there is nothing in the modlog about me. So, Rimu is out here complaining about the modlog problem but he's actively sidestepping it. He's silencing users without any reason. So... he's shadowbanning people.

Are we SURE he isn't Spez?

The code is open source, nobody bothers to read it. If that's supposed to be obfuscation, then that's not really effective.

The same arguments are made when an overwhelming amount of paperwork is dumped on someone during discovery in a trial. It's not a good argument then and it's not a good argument now. If you have to go through and check every single thing he's done to find the hidden things he's put in there without announcing then that is not transparent. You simply cannot change the fact that Rimu overwhelmingly puts his opinions into Piefed as a whole and then leaves it for everyone else to find the opinion and then tell him to add a toggle. He fights against it before eventually adding a toggle. Meanwhile, damage has already been done for however long with his garbage running rampant without anyone noticing it.

If he is not willing to point out what is opinion and what is code then I am not willing to fact-check every piece of his work to find what is code and what is crap. He's being disingenuous and underhanded.

I am not continuing this conversation.

Although maybe Rimu should have been more clear in pointing out that this seems to be not an official instance tool, but rather something some moderators have cobbled together themselves.

This isn't an issue of clarity. His closing call to action is to 'develop awareness so that people can choose which instances to join and interact with'. There aren't any practical administrative solutions to the problem being called out, with the exception of defederation or the threat thereof. Any single user on the entire fediverse can copy-paste user activity into any LLM and use the output to make moderation decisions, or craft personalized agitprop or whatever else, but centering the focus on instances that allow their usage turns the issue into a nail that can be solved with a hammer.

You are jumping to conclusions. I think it is generally worthwhile to discuss the use of LLMs for making moderation decisions and also using them to produce ideological profiles of users.

The worthiness of a discussion has no bearing on the intent and framing of the person prompting it.

The questions are being raised by the same person who included global reputation scores in his backend piefed code for the purposes of suppressing his personal pet peeve behaviors. I find that to be informative context for considering the intent of the discussion being prompted.

edit: Oh look, here he is saying exactly what I was just pointing out was likely the intent

I’m lowkey kind of fascinated this morning with what feels like a moment of real panic among western liberal-democratic institutions (projecting a little from my morning news and coffee). That an anarchist instance is getting this much targeted harassment feels like a microscopic extension of that (if I allow myself to be so bold)

As far as I can tell, dbzer0 isn’t even being explicitly called out here, but it has an undeniable dbzer0 flavor to it. If it doesn’t come out that this was one of our mods at this point, I’d almost be disappointed

These types of posts are why people (from all walks of life) view western leftism as more of an aesthetic, performative thing.

Right - which is why it was quite interesting watching Fox news have a 20 minute power struggle over the sudden popularity of May Day in the US and the rise of ""extreme socialist sentiment""

All online political discourse is performance - feel free to speculate how well it is representative of IRL leftist spaces in the west.

From my time in the US, what you describe seems like a pretty run of the mill local rhetorical strategy; a tried and true American polemic if you will.

Sure, but even with something like online political discourse you can do better than getting obsessed over a bastardized provincial political term (liberal which means something completely different outside of the US) and providing cover for promoters of russian and Chinese genocidal imperialism and propaganda. We are not talking about a deep discussion on philosophy and political economy, just the absolute basics.

Can't speak for your definition of leftist spaces, but many (not all of course) self-proclaimed western leftists IRL have a very performative approach to imperialism (among other things).

Sure, but even with something like online political discourse you can do better than getting obsessed over a bastardized provincial political term (liberal which means something completely different outside of the US)

Sorry, I'm having a hard time following the train of thought - are you referring to my use of 'liberal-democratic institutions'?

I don't want to word-vomit on you unnecessarily if you're pointing to something else or speaking broadly about leftist discourse on lemmy (i've seen plenty of debates like the one you're describing)

and providing cover for promoters of russian and Chinese genocidal imperialism and propaganda

Ok well now i'm even more confused - where is this jab coming from?

Maybe you're taking issue with my categorizing db0 as a leftist space and are speaking broadly about the perspectives about china and russia from that instance? What are we talking about here?

liberal which means something completely different outside of the US

Not really, both imply right wing pro-capitalist beliefs. American Liberals just tack 'progressivism' onto their meaning to steal some left clout.

So right wingers complaining about leftists is why people view western leftism as performative?

Honestly sounds like maybe people should be taking issue with the complaining right wingers there.

What's funny is I didn't have much of a negative dbzer0 experience until you two guys started making this about that.

That's an odd takeaway, for sure.

Is this a negative db0 experience for you?

I'm just saying when there is a particular thing and someone starts pulling in something unrelated as a conspiracy it leaves a bad taste. It's a bit like folks suddenly saying something about lemmy.ml being so and so in an unrelated type post that gets me to do my posting in their communities but in a reverse kinda way. Rimu's last post about a trend he saw, I think it had some interesting perspectives and few if any were that the instances were ban happy. Similarly this one has some good conversations going.

unrelated

Well, it is noting that there's now at least an appearance of a relationship going on. Especially so, if you're already clued into the 'ban-happy' statistics magic thread.

Similarly this one has some good conversations going.

Hopefully those continue unimpeded and this can remain contextual or tangential.

This one did not name any instances. Paranoia is the only reason to link them, or knowledge that the two things are indeed connected. Each is fine to me though. Bringing up data and slicing and dicing it a bit is fine, and the convo brought up issues with the way it was massaged. Similarly this is about a particular thing. Neither indicated an opinion or want for some sort of action against instances. So yeah, unrelated. But yeah, I like good and vibrant convo, which is why I have enjoyed both posts. One thing I'm not wild about is that some comments suggest it's an admin thing and should be discussed in some admin community, but I'm not wild about the cigar-filled room type thing.

Statistics are what racists use to justify hate of minorities. Statistics are very easy to abuse and misuse, Rimu has used them to push a narrative when the reality is db0 was only high because of 1 moderator issuing a lot of bans which he used to frame an entire instance as ban happy.

Rimu has confirmed this is about db0.

The answer to these kinds of issues is never disclosures or ToS or admin vigilance. It's always technical. Everything which is technically possible will become normal.

Lemmy is not popular because it is a well designed piece of technology. Frankly it's a pretty naive implementation of ActivityPub. Its popularity comes from being the biggest alternative around when Reddit pissed off a good chunk of its users.

The only way to control how data is used is to make misuse technically or practically impossible. Until then, expect all the data on the fediverse to be used in every way possible for any purpose, and act accordingly.

I don't see a technical or practical way to limit - let alone render impossible - AI moderation tools that is not at odds with decentralized open-protocol social media.

If you can copy-paste user activity into a textbox, this remains trivial.

I recently discovered that some popular federated instances have been using LLM-assisted moderation tooling

First of all, I agree with your main point, that this* is problematic, wrong even (and should not happen).

But I need to ask: how did you find out? Is this something that could be traced objectively, or did some people report/admit it?

Are they uploading stuff to corporate LLMs, i.e. LLMs that do not run themselves? (I think you answered this already when you wrote OpenAI, but I want this spelled out)

Are only admins or also mods doing this? That would make a big difference.

I'm also a little unclear about the process: are they uploading (copy-pasting) the actual comments, or links to them? To what extent can all this be automated on Lemmy/Piefed etc.? I.e., are there admin tools that just spit out all of a user's content?

* again: specific political profiling outsourced to LLMs. OTOH we already have instances that do this manually.

But imo the process is deplorable even if they use LLMs with different prompts for modding.

It can be traced objectively.

It's mods.

They're using software that they made to do this quickly and easily, it's not a manual copy and paste situation.

Sure bro, keep on making shit up, I guess? By the way I have removed all the community bans for yourself and Skavau. You only had to ask btw. Might have saved a lot of pointless drama.

It can be traced objectively.

Can you elaborate?

Also, in another comment you said it was admins, not mods? Or both?

It's at least one admin, we don't know how widely it's used. It does have the logo of a group of 3 instances (Fediverse Anarchist Flotilla), so it seems to be made to be used by many people in an ongoing way.

More details at: https://piefed.social/c/fediverse@lemmy.world/p/2035379/proof-of-ai-assisted-political-profiling-by-unruffled-lemmy-dbzer0-com

I.e. "I just saw a modlog entry, jumped to conclusions, didn't ask for clarification, didn't speak to anyone from that instance, jumped straight into making a drama post"

Correction, three drama posts.

Nah. I discussed it with Unruffled days ago. Here's a screenshot from Matrix in the Threadiverse Admins room:

If you're not going to name them, why post here at all? Don't you have other communication channels to "give them a fair chance to reply"? Why post here, letting users form their own assumptions about what those instances are without any solid evidence?

OP literally asks like 10 relevant questions for this place, and names their reasons for not naming specific instances. And all you focus on, is the question: who did it?

To me that is proof that OP did the right thing here.

Let's first figure out how to approach this without knowing the perpetrator.

The posts/comments on the fediverse are already public. The privacy questions are better answered here by another commenter:

My issue is that OP is not providing any solid proof. They are just giving 'wink wink's about some 'popular' instances doing it. When asked whether they have proof, OP says they have proof of some mods doing it. Mods don't handle instances, admins do. They haven't yet provided any concrete proof, yet they are creating an impression that some instances are banning en masse using LLMs based on "political ideology".

Hardly, this was just a bait post. Rimu seems to be convinced if he flings enough mud, eventually some of it will stick. It's all very petty.

Is it not public knowledge that lemmy already gets scraped by LLM bots? Which is why every bigger instance has anti-LLM measures in place now to limit that behavior? To me this just looks like a mod trying to get ai-generated reports which is not a bad idea imo since I know reporting is not often done by users who see rule breaking stuff.

This is AI generated bullshit below, it should be treated as such. I only want to convey the idea that what you do on lemmy is already fed to LLMs.

here is chatgpt's reply for `What do you know about lemmy user and piefed dev rimu?`

Here’s a grounded picture of “rimu” in the Lemmy / fediverse context:

🧑💻 Who “rimu” is

- Rimu (Rimu Atkinson) is the lead developer of PieFed, a Reddit/Lemmy-style platform in the fediverse. (Elite AI Tools)

- He’s also the main author of the underlying software (pyfedi), built with Python/Flask and designed as an alternative to Lemmy and Mbin. (IFTAS)

- The flagship instance is piefed.social, which he runs and develops actively. (IFTAS)

🧩 Role in the fediverse

- He’s not just a random user—he’s a core project maintainer / “benevolent dictator” style lead for PieFed’s development. (PieFed)

- He’s fairly active on Lemmy via rimu@piefed.social, with hundreds of posts and thousands of comments across tech, politics, and general discussion. (lemmy.world)

- He moderates multiple communities and participates in meta discussions about federation, scaling, and platform design. (lemmy.world)

🛠️ What PieFed (his project) is about

PieFed is positioned as:

- A federated Reddit-like platform interoperable with Lemmy via ActivityPub (Elite AI Tools)

- Focused on:

  - Simplicity of codebase (easy for contributors) (PieFed)

  - Trust/safety features (reputation systems, filtering, anti-spam tooling) (Elite AI Tools)

  - Some experimental ideas, like merged cross-posts and AI-content detection (Elite AI Tools)

💬 Reputation & controversy

Rimu is somewhat known and occasionally controversial in fediverse circles:

- Supporters see him as:

  - A productive solo/lead dev shipping features quickly

  - Someone pushing new ideas beyond Lemmy’s design

- Critics argue:

  - Some features reflect strong personal opinions embedded in the platform (e.g., moderation logic, reputation systems) (lemmy.world)

  - PieFed can feel more opinionated / experimental compared to Lemmy’s more neutral baseline

Important nuance: because everything is open source and instance-configurable, many of these behaviors can be changed by instance admins.

🧠 What he’s like as a user

From his public activity:

- Talks about tech (ActivityPub, scaling, APIs)

- Engages in global politics and news discussions

- Shares design decisions and roadmap thoughts about PieFed

- Generally communicates in a direct, pragmatic developer tone

🧾 Bottom line

- Rimu is a real, identifiable fediverse developer, not just a random Lemmy user.

- He’s best understood as: a lead dev + active community participant shaping an alternative to Lemmy

- His visibility comes mainly from his project (PieFed) rather than mainstream recognition.

If you want, I can compare PieFed vs Lemmy vs Mbin in terms of ideology/design—that’s usually where discussions about Rimu get interesting.


He’s not just a random user—he’s a “dictator” style lead for PieFed’s development.

😂

Chairman Rimu please save us from the memes

Maybe we need a "meanwhile on piefed" community to talk about drama related to Rimu's flinging of mud and insults, as well as the many additions to piefed's code which make it a much worse platform. It doesn't get talked about much except in comment chains, and Rimu controls most communities about piefed itself. So a community beyond his reach would probably be good.

I think we just need Rimu to stop making so much drama. Things were fine a few weeks ago but something with him changed.

I don't agree, he's had a history of being weirdly controlling and opinionated. Piefed's codebase is a testament to that. @Stamets@lemmy.dbzer0.com already pointed out one such malicious change Rimu put in and then decided to add a toggle for when he got backlash for it. He did the thing where meme communities were marked low quality long before this recent drama arc of his.

That comment will be invisible on piefed.social as Rimu has sidestepped the modlog and personally silenced me for criticising him <3

Please dont.

I think LLMs could be useful tools for moderation, and you might even get away with smaller models for it, but I don't think people should be outsourcing this to big corpos, due to their ability to manipulate the models.

I agree, we need our own servers with local AI models for the fediverse.

AI horde. Local models. Crowdsourced. Distributed. FOSS.

Today with models like gemma4, you could literally do this on basically any hardware. But for text moderation you don't even need LLMs: we have ML models that do text moderation perfectly fine and run 10x faster.

Rimu farming more drama?

Are they farming for more or trying to distract from their last attempt at just asking questions about the statistics I spent time crafting a specific and poorly thought out hypothesis

I'm mostly just surprised that a mod would pay for tokens to moderate. The Fediverse is radically public by design, so I don't have any expectation of privacy. I'd bet at least someone is gobbling up the entire Fediverse to train AI, since companies are so desperate for new human-generated data.

As for the privacy issue:

The only counter to this is to move to a closed end-to-end encrypted groupchat so it can't be mass LLM analyzed...

If you want a public forum, well... it's public...

You can't stop a script from just grabbing all the posts/comments... and it's also federated, so the bot only needs to be able to access one instance to get it all...

I mean they could simply just set up their own instance and pretend it's just a benign single-user instance... like what are you gonna do, defed all small instances preemptively? Use "login walls" to make the forum private? And somehow trust all other admins that are federated and make them also enforce a "login wall" policy?

It's a PUBLIC forum...

The only solution for privacy is a groupchat that only lets in people who can keep a promise not to screenshot everything and give it to an LLM.

This is the person calling you a tankie. Someone so afraid of words that they need a hallucinating robot to hold their hand and confirm that everything is a secret plot against them. The absolute only way I could see this being useful is for something like trying to sniff out if a Lemmy.world mod account is a leftist infiltrator or not. Someone who had a different opinion on a current event.

You could maybe run a speech pattern comparison but that’s it. For everything else you just made Stupid Reddit and the purpose of their forum is to feed training data to ChatGPT so that it can profile Fediverse users.

This is the kind of shit dystopian novels are made out of. So angry about people calling out actions you built a tool to analyze why they did it, so you can purge users from your digital kingdom.

I for one welcome flat.world and Piefed showing their true intentions. Digital colonization of activitypub and removal of the people who helped to build it. They didn't want to leave reddit, they wanted to be reddit. This is some Spez shit.

Maybe in 2 weeks Piefed will hard-code that anyone Rimu has tagged for disagreeing with them, or for mild criticism, is unable to make accounts or federate posts, with a false error code.

This is flat out not ok, does not matter who is doing it. Our instances should defederate from all that do this.

I would opt out, no question, but I don't believe it's possible. GDPR does not matter here, as nothing can be proven unless the perpetrators give themselves up.

What do you think of lemmy being searchable via search engines, since that's how most of the training data is generated? Or that lemmy.world data is already in the OpenAI training sets?